Last Updated On 06/02/2026

AI projects are moving faster than governance teams can keep up. Models are deployed, retrained, and reused long before policies are updated or risks are fully understood. This growing gap is exactly why ISO 42001 compliance challenges are becoming harder to manage as 2026 approaches.

In recent AI governance training and audit-readiness workshops, many organizations report that their biggest challenge is not grasping what ISO 42001 requires but keeping governance aligned with AI models that are already in production and changing rapidly.

Heading into 2026, pressure is rising from multiple directions. The EU AI Act, sector-specific regulations, customer expectations, and public scrutiny of automated decisions are all converging. Together, they are exposing real AI governance risks that many organizations are not fully prepared for.

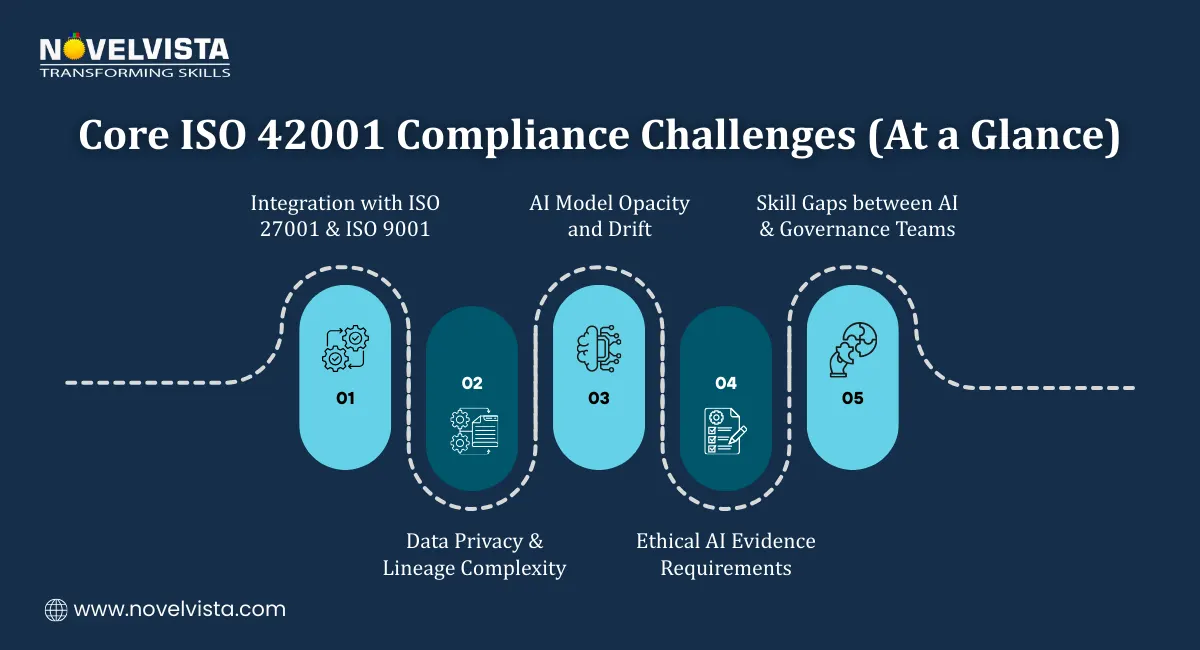

This article breaks down the most common ISO 42001 compliance challenges, why ISO 42001 implementation issues keep surfacing, and how ethical AI framework challenges complicate governance. The goal is not theory, but clarity on what actually makes compliance difficult in real environments.

One major reason ISO 42001 implementation issues appear early is that AI systems are not static. Unlike traditional management systems that control processes, ISO 42001 must govern learning systems that evolve over time.

In practice, AI models keep changing after deployment as they are retrained, updated, and reused.

Policies, controls, and risk assessments often struggle to keep pace. As a result, governance intent looks strong on paper, while operational reality tells a different story. This mismatch is at the heart of many ISO 42001 compliance challenges.

Another issue is lifecycle complexity. AI systems have longer and more interconnected lifecycles than most organizations expect. Data sourcing, model development, deployment, monitoring, retirement, and reuse all introduce risk points. When these are not clearly governed, AI governance risks multiply quietly.

Many organizations also assume that existing ISMS (information security management system) or QMS (quality management system) controls can simply be reused. While integration is possible, ISO 42001 implementation issues arise when AI-specific risks, such as bias, limited explainability, and autonomous decision-making, are treated as extensions of IT or quality risks instead of distinct governance concerns.

One of the most visible ISO 42001 compliance challenges is integration. Organizations already certified to ISO 27001 or ISO 9001 often try to “plug in” ISO 42001 without redesigning governance structures.

During early ISO 42001 readiness assessments, auditors frequently observe that AI risks are discussed but not formally owned. This gap between discussion and accountability is a leading cause of delayed implementation.

AI system complexity is another major source of ISO 42001 compliance challenges. Deep learning models and black-box algorithms make transparency and traceability difficult to demonstrate.

Monitoring AI systems is not a one-time task. Performance degradation, bias re-emergence, and unintended outcomes require ongoing review. When organizations treat these controls as static, ISO 42001 implementation issues surface quickly.

This complexity increases audit pressure and magnifies AI governance risks, especially for high-impact or regulated use cases.
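ISO 42001 does not prescribe specific monitoring metrics, so the following is only a minimal Python sketch of what one recurring review might check, under stated assumptions: a baseline accuracy recorded when the model was approved, labeled outcomes for the review period, and demographic parity difference (the gap in positive-prediction rates between groups) as the fairness signal. The function names and the 0.05 and 0.10 thresholds are hypothetical and would need to be set per use case.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewResult:
    accuracy: float
    accuracy_drop: float
    parity_gap: float
    reasons: list[str] = field(default_factory=list)

    @property
    def needs_review(self) -> bool:
        # Any breached threshold triggers a human review.
        return bool(self.reasons)


def accuracy(y_true: list[int], y_pred: list[int]) -> float:
    """Share of predictions that match the observed outcomes."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def demographic_parity_gap(y_pred: list[int], groups: list[str]) -> float:
    """Largest difference in positive-prediction rate between any two groups."""
    rates = []
    for g in set(groups):
        preds = [p for p, grp in zip(y_pred, groups) if grp == g]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def periodic_review(y_true, y_pred, groups, baseline_accuracy,
                    max_accuracy_drop=0.05, max_parity_gap=0.10) -> ReviewResult:
    """Compare one review window against the approved baseline (hypothetical thresholds)."""
    acc = accuracy(y_true, y_pred)
    drop = baseline_accuracy - acc
    gap = demographic_parity_gap(y_pred, groups)
    result = ReviewResult(accuracy=acc, accuracy_drop=drop, parity_gap=gap)
    if drop > max_accuracy_drop:
        result.reasons.append(f"accuracy dropped by {drop:.2f} versus baseline")
    if gap > max_parity_gap:
        result.reasons.append(f"positive-rate gap between groups is {gap:.2f}")
    return result


# Example: accuracy has degraded from an approved baseline of 0.90.
result = periodic_review(
    y_true=[1, 0, 1, 1, 0, 1, 0, 0],
    y_pred=[1, 0, 0, 1, 0, 1, 1, 0],
    groups=["a", "a", "a", "a", "b", "b", "b", "b"],
    baseline_accuracy=0.90,
)
print(result.needs_review, result.reasons)
# True ['accuracy dropped by 0.15 versus baseline']
```

Keeping the thresholds as explicit parameters is a deliberate choice: the review criteria themselves can then be documented, approved, and revisited, rather than living only in someone's head.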

A less visible but equally serious contributor to ISO 42001 compliance challenges is the shortage of skilled professionals. Governing AI requires people who understand both technical systems and governance standards.

Many organizations underestimate the learning curve involved in AI governance. Teams with strong technical skills often lack audit awareness, while compliance teams struggle to evaluate model behavior and lifecycle risks. These gaps are a recurring cause of ISO 42001 implementation issues and unresolved ethical AI framework challenges.

Data remains the foundation of AI, and it is also one of the biggest risk areas. Ensuring GDPR-compliant data handling, anonymization, and consent tracking is still a struggle for many organizations.

Weaknesses in these areas directly increase AI governance risks and make ISO 42001 compliance challenges harder to close, especially under regulatory scrutiny.

Ethical AI requirements are where many organizations feel the most pressure. Bias, fairness, explainability, and accountability are easy to discuss but hard to prove.

Auditors often find that ethical principles are documented but not operationalized. Transparency exists in policy statements, but not in system behavior. This gap creates recurring ISO 42001 implementation issues.

Regulators and certification bodies increasingly expect ethical AI controls to be measurable and auditable. High-level ethics statements alone are no longer sufficient evidence of responsible AI governance.

As ISO 42001 audits increase, certain AI governance risks appear repeatedly across industries. These risks often exist long before formal audits begin, but they only become visible when governance is tested.

One of the most common ISO 42001 compliance challenges is unclear ownership of AI risks. Responsibilities under Annex A are often fragmented across IT, data science, legal, and compliance teams.

When accountability is unclear, ISO 42001 implementation issues escalate. Decisions get delayed, controls are inconsistently applied, and risk treatment actions stall. This fragmentation significantly increases AI governance risks, especially for high-impact AI systems.
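Unclear ownership is easier to catch before an audit if every AI system sits in an inventory with a named risk owner. The Python sketch below assumes a hypothetical in-house register: the `AISystem` fields, the three-level impact scale, and the rule that high-impact systems also need a documented risk treatment are illustrative assumptions, not requirements quoted from ISO 42001.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    business_unit: str
    impact_level: str                      # hypothetical scale: "low", "medium", "high"
    risk_owner: Optional[str] = None       # None means no one is accountable yet
    risk_treatment_documented: bool = False


def ownership_gaps(register: list[AISystem]) -> dict[str, list[str]]:
    """Flag systems with no named risk owner, and high-impact systems with no documented treatment."""
    gaps: dict[str, list[str]] = {"no_owner": [], "high_impact_untreated": []}
    for system in register:
        if not system.risk_owner:
            gaps["no_owner"].append(system.name)
        if system.impact_level == "high" and not system.risk_treatment_documented:
            gaps["high_impact_untreated"].append(system.name)
    return gaps


# Hypothetical register entries for illustration.
register = [
    AISystem("credit-scoring-model", "Lending", "high",
             risk_owner="Head of Credit Risk", risk_treatment_documented=True),
    AISystem("support-chatbot", "Customer Service", "medium"),
    AISystem("cv-screening-tool", "HR", "high", risk_owner="HR Director"),
]
print(ownership_gaps(register))
# {'no_owner': ['support-chatbot'], 'high_impact_untreated': ['cv-screening-tool']}
```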

Vendor-supplied AI tools and SaaS platforms introduce risks that many organizations underestimate. These third-party systems often operate outside internal governance frameworks.

Such blind spots amplify ISO 42001 compliance challenges and expose organizations to regulatory, ethical, and reputational risks. Shadow AI, meaning AI tools that teams adopt without formal approval or oversight, bypasses governance entirely, making it one of the fastest-growing AI governance risks heading into 2026.
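Shadow AI only becomes manageable once it is visible. As a minimal sketch, assume the organization can export the names of AI tools actually in use (the data source, such as procurement or expense records, is an assumption) and compare them against its approved AI inventory; the tool names and the `find_shadow_ai` helper are hypothetical.

```python
def _normalize(name: str) -> str:
    """Compare tool names case-insensitively, ignoring surrounding whitespace."""
    return name.strip().lower()


def find_shadow_ai(observed_tools: set[str], approved_inventory: set[str]) -> set[str]:
    """Return tools seen in use that are missing from the approved AI inventory."""
    approved = {_normalize(t) for t in approved_inventory}
    return {t for t in observed_tools if _normalize(t) not in approved}


# Hypothetical tool names, e.g. exported from procurement or expense records.
observed = {"vendor-ocr-service", "genai-writing-assistant", "Internal-Forecast-Model"}
approved = {"internal-forecast-model", "vendor-ocr-service"}
print(find_shadow_ai(observed, approved))
# {'genai-writing-assistant'}
```

Anything this returns is a candidate for either formal onboarding (risk assessment, named owner, vendor review) or retirement; the comparison is kept deliberately simple so the harder decisions stay with the governance process.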

AI governance cannot succeed in isolation. Yet many audits reveal resistance from engineering teams or business units that view governance as an obstacle rather than a safeguard.

This lack of alignment weakens controls and leads to recurring ISO 42001 implementation issues. Without leadership support, ethical AI framework challenges remain unresolved and risks continue to grow.

While the challenges are real, they are not unmanageable. Organizations that address ISO 42001 compliance challenges early tend to progress faster and with fewer audit surprises.

ISO 42001 leaves organizations flexibility in how they scope and implement their AI management system (AIMS). Phased implementation helps reduce pressure and makes ISO 42001 implementation issues easier to control ahead of the 2026 compliance surge.

Ignoring ISO 42001 compliance challenges does not delay risk; it compounds it. Organizations that postpone governance face higher remediation costs, more audit findings, and greater reputational exposure later.

Addressing ethical AI framework challenges early also improves internal confidence. Teams understand expectations, controls become practical, and governance shifts from theory to daily operations.

ISO 42001 compliance challenges reflect the reality of governing complex, evolving AI systems. These challenges are not a sign of failure; they signal where governance must mature.

ISO 42001 implementation issues can be managed with realistic planning, strong ownership, and cross-functional alignment. Ethical AI framework challenges reinforce the need for continuous oversight rather than one-time controls.

As AI regulations mature globally, ISO 42001 is increasingly viewed as a foundational governance framework rather than an optional certification, particularly for high-impact and regulated AI use cases.

Organizations that treat ISO 42001 as a living system, not a checklist, will be better prepared for audits, regulation, and long-term trust in AI-driven decisions.
